Automotive ADAS

Advanced Driver Assistance Systems (ADAS) technology continues to evolve, driven largely by automotive regulations and consumer demand. ADAS keeps drivers aware of their surroundings for an easier, safer and more comfortable ride. ADAS is the essential step between the initial Driver Assistance (DA) systems and fully autonomous cars. Typical solutions combine sensors such as RADAR, LIDAR and digital CMOS cameras to capture, fuse and process data from the vehicle's driving environment. Popular camera-based ADAS functions are:

- Multi-camera 360-degree surround-view

- Rear-view camera parking assistance

- Lane Departure Warning (LDW) – based on a line detection algorithm; informs the driver of unintentional lane departures

- Pedestrian Detector (PD) – based on an object detection algorithm configured to detect pedestrians in front of the vehicle

- Forward Collision Warning (FCW) – based on an object detection algorithm configured to detect multiple vehicles in the driving path ahead

- Traffic Sign Recognition (TSR) – based on object detection and classification algorithms that detect and recognize traffic signs in the vehicle's environment

Figure 1: TySOM-3-ZU7EV

Figure 2: FMC-ADAS expansion card

As ADAS technology continues to evolve, the need for high-performance re-programmable platforms has never been greater. Aldec provides ADAS development platforms including reference designs and tutorials based on the TySOM Embedded Development boards and FMC-ADAS extension card.

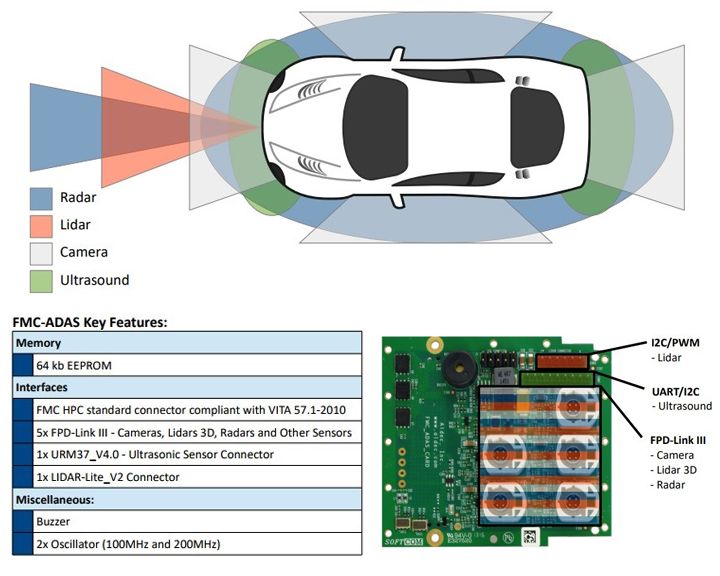

Figure 3: FMC-ADAS functionalities

The ADAS application contains the following features:

Figure 4: ADAS solution processing detail

Main Features

- One Embedded development board (TySOM-3-ZU7EV/TySOM-2-7Z100/TySOM-2-7Z045/TySOM-2A-7Z030) and FMC-ADAS Hardware Platform

- Dual-core ARM Cortex-A9 APU (Zynq-7000 boards) or quad-core ARM Cortex-A53 APU (Zynq UltraScale+ MPSoC board) & 1GB DDR3 memory

- Programmable Logic (PL) for custom hardware accelerators and peripheral controllers

- On-board connectors for Human Interface Devices (HID): USB 3.0, USB 2.0, Ethernet, HDMI, QSFP+, Wi-Fi/Bluetooth

- FMC-ADAS with 5 camera connectors based on DS90UB914Q deserializers, ultrasonic and LIDAR sensor connectors and a buzzer device

- 4x automotive wide-lens 192-degree HDR Blue Eagle cameras and 1x 52-degree camera

- Multi-Camera Surround View, Driver Drowsiness Detection and Smart Rear-View reference designs, including hardware, firmware and software parts

- Embedded Linux OS with command line interface over HDMI screen

- V4L2 compatible implementation of Video Input Port (VIP) camera interfaces

- HW/SW support for ultrasonic sensor

- HW/SW support for buzzer device

- DRM compatible implementation of HDMI output interface

- Linux user application for simultaneous streaming from 4 cameras, edge detection and frame merging, with critical parts accelerated using the Xilinx SDSoC tool

Solution Contents

- One Embedded development board (TySOM-3-ZU7EV/TySOM-2-7Z100/TySOM-2-7Z045/TySOM-2A-7Z030) with FMC-ADAS extension card and Riviera-PRO Advanced RTL Simulation/Debugging Platform (optional)

- Technical documentation, tutorials and white papers

- Multi-Camera Surround View, Driver Drowsiness Detection and Smart Rear-View reference designs Quick Start Guide

- Multi-Camera Surround View, Driver Drowsiness Detection and Smart Rear-View reference design binaries

- SDSoC hardware platform package (Board Support Package - BSP)

- Blue Eagle camera Linux device drivers source code

- Linux userspace application source code

- 1-year voucher for Xilinx Vivado Design Suite (latest version)

- 1-year voucher for Xilinx SDSoC tool (latest version)

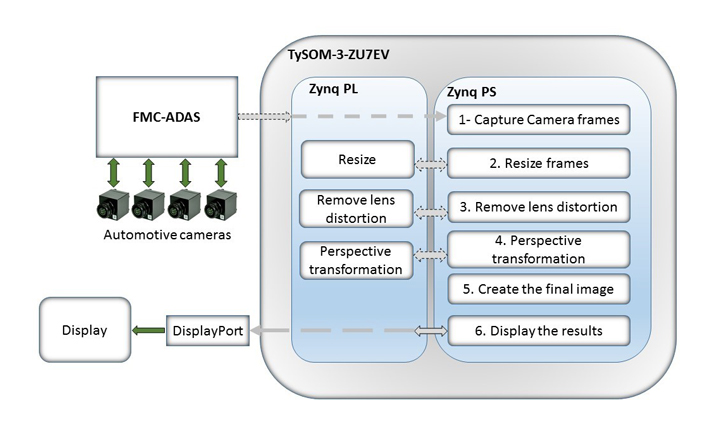

Bird's Eye View

The bird’s eye view is a vision monitoring system used in automotive ADAS technology that provides a 360-degree, top-down view of the vehicle's surroundings. Its main benefit is to help the driver park the vehicle safely, and it can also be used for lane departure and obstacle detection. Aldec has designed a bird’s eye view application to help ADAS designers. The application is implemented using the TySOM-3-ZU7EV embedded development board, an FMC-ADAS daughter card and four Blue Eagle cameras, each with a 192-degree wide lens and running at 30fps. The hardware setup and the output are shown in the following image.

The most computationally intensive parts of the code are offloaded from the ARM Cortex-A53 processor to the FPGA fabric of the Xilinx® Zynq UltraScale+ MPSoC device using the Xilinx SDSoC™ tool in order to achieve real-time processing performance. The design flow of this demo consists of 6 stages, split between the FPGA and the ARM processor as follows:

- Frame capturing (ARM processor)

- Frame resizing (FPGA)

- Lens distortion removal (FPGA)

- Perspective transformation (FPGA)

- Final image creation (ARM)

- Display the results (ARM)
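As a rough illustration of the perspective transformation stage, the sketch below computes a 3x3 homography from four point correspondences and maps a camera pixel onto a top-down ground plane. It is a minimal software sketch, not Aldec's FPGA implementation: the function names (`solve_linear`, `homography_from_points`, `warp_point`) and the example coordinates are hypothetical, and lens distortion is assumed to have been removed already.

```python
def solve_linear(a, b):
    """Solve the square system a * x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(map(float, row)) + [float(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def homography_from_points(src, dst):
    """Return the 3x3 matrix H (with H[2][2] = 1) mapping four src points to four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h0*x + h1*y + h2) / (h6*x + h7*y + 1), rearranged to be linear in h
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        # v = (h3*x + h4*y + h5) / (h6*x + h7*y + 1)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def warp_point(h, x, y):
    """Map a camera pixel (x, y) to top-down view coordinates using homography h."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return u, v


# Hypothetical calibration: a trapezoid on the road as seen by the camera,
# mapped to a rectangle in the top-down view.
camera_quad = [(100, 200), (540, 200), (640, 480), (0, 480)]
topdown_quad = [(0, 0), (400, 0), (400, 600), (0, 600)]
H = homography_from_points(camera_quad, topdown_quad)
```

In a full pipeline the same mapping would be applied to every pixel of each camera frame, typically through a precomputed lookup table, which is the kind of regular per-pixel work that suits FPGA acceleration.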

The following diagram shows the detail of this implementation.

Multi-Camera Surround View

ADAS Multi-Camera Surround View technology is a parking assistance system available in today’s mid- and high-cost vehicles. The key feature is a set of 4 HDR wide-lens cameras installed around the vehicle to give a full 360-degree view of the surroundings on a single screen. The reference design grabs, processes and displays 4 simultaneous camera video streams in real time. The most computationally intensive parts of the code are offloaded from the ARM processor to the FPGA fabric of the Xilinx® Zynq-7000 All Programmable SoC or Xilinx® Zynq UltraScale+ MPSoC device using the Xilinx SDSoC™ tool in order to achieve real-time processing performance. The accelerated part includes the edge detection, colorspace conversion and frame merging tasks. The edge detector highlights possible obstacles around the vehicle that cannot be easily noticed by the human eye.

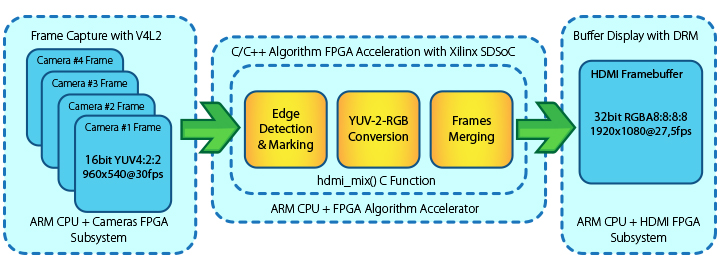

Figure 5: Multi-camera surround view processing detail
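The three accelerated tasks can be sketched in plain software as follows. This is an illustrative sketch only, not the reference design's code: the Sobel operator, the BT.601 grayscale weights, the threshold value and the 2x2 screen layout are all assumptions, since the reference design's actual edge detector, colorspace conversion and merge layout are not specified here. All function names are hypothetical.

```python
def rgb_to_gray(pixel):
    """Convert one (r, g, b) pixel to 8-bit luma using integer BT.601 weights (assumed)."""
    r, g, b = pixel
    return (299 * r + 587 * g + 114 * b) // 1000


def sobel_edges(gray, threshold=128):
    """Return a binary edge map (0 or 255) of a grayscale image given as a list of rows.

    Border pixels are left at 0 because the 3x3 window does not fit there.
    """
    h, w = len(gray), len(gray[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = (gray[y - 1][x + 1] + 2 * gray[y][x + 1] + gray[y + 1][x + 1]
                  - gray[y - 1][x - 1] - 2 * gray[y][x - 1] - gray[y + 1][x - 1])
            gy = (gray[y + 1][x - 1] + 2 * gray[y + 1][x] + gray[y + 1][x + 1]
                  - gray[y - 1][x - 1] - 2 * gray[y - 1][x] - gray[y - 1][x + 1])
            # |gx| + |gy| is a common hardware-friendly approximation of the gradient magnitude
            out[y][x] = 255 if abs(gx) + abs(gy) >= threshold else 0
    return out


def merge_quad(top_left, top_right, bottom_left, bottom_right):
    """Tile four equally sized frames into one 2x2 output frame (assumed layout)."""
    top = [a + b for a, b in zip(top_left, top_right)]
    bottom = [a + b for a, b in zip(bottom_left, bottom_right)]
    return top + bottom


# Tiny 4x4 RGB test frame: dark left half, bright right half (a vertical edge).
rgb_frame = [[(0, 0, 0), (0, 0, 0), (255, 255, 255), (255, 255, 255)] for _ in range(4)]
gray_frame = [[rgb_to_gray(p) for p in row] for row in rgb_frame]
edges = sobel_edges(gray_frame)
screen = merge_quad(edges, edges, edges, edges)
```

Each of these steps touches every pixel with a small fixed amount of arithmetic, which is why they are natural candidates for offloading to programmable logic.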

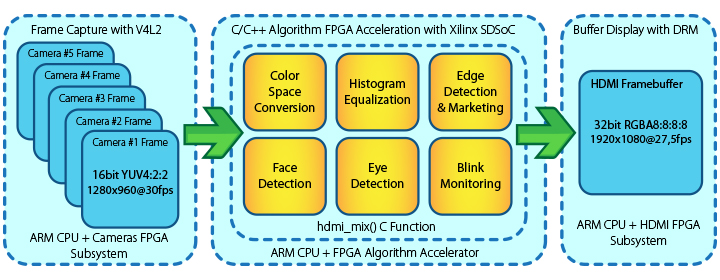

Driver Drowsiness Detection

Driver drowsiness is a significant cause of road accidents, especially among truck drivers. To help prevent such collisions, smart vehicles use a dedicated camera to detect driver drowsiness in its early stages. Aldec’s ADAS solution includes this feature, using one HDR camera allocated to the driver. The Pixel Intensity Comparison-based Object detection (PICO) algorithm is used for this application because of its high processing speed and ease of modification. In this application, the most computationally intensive parts of the code are offloaded from the ARM processor to the FPGA fabric of the Xilinx® Zynq-7000 All Programmable SoC or Xilinx® Zynq UltraScale+ MPSoC device using the Xilinx SDSoC™ tool in order to achieve real-time processing performance. The accelerated parts are colorspace conversion and histogram equalization. In the next step, face detection, eye detection and blink detection are performed. In the decision-making stage, a buzzer sounds if the driver is detected to be sleepy. The following diagram shows the steps of the Driver Drowsiness Detection application.

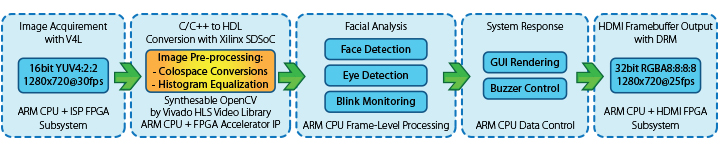

Figure 6: Driver Drowsiness Detection processing detail
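Two of the steps described above lend themselves to a short software sketch: histogram equalization, which is one of the accelerated parts, and the final decision that triggers the buzzer. The code below is a minimal sketch under stated assumptions, not the reference design's implementation. The decision rule (eyes closed for more than a fixed number of consecutive frames) and the `max_closed_frames` value are hypothetical; the face, eye and blink detection that would produce the per-frame flags is not shown.

```python
def equalize_histogram(gray):
    """Histogram-equalize an 8-bit grayscale image given as a list of rows."""
    hist = [0] * 256
    for row in gray:
        for value in row:
            hist[value] += 1

    # Cumulative distribution of pixel intensities.
    cdf, running = [0] * 256, 0
    for level, count in enumerate(hist):
        running += count
        cdf[level] = running

    total = running
    cdf_min = next(c for c in cdf if c > 0)
    if total == cdf_min:
        # Single-intensity image: nothing to stretch.
        return [row[:] for row in gray]

    # Map each intensity so that the output histogram is approximately flat.
    lut = [max(0, round((c - cdf_min) * 255 / (total - cdf_min))) for c in cdf]
    return [[lut[value] for value in row] for row in gray]


def is_drowsy(eye_closed_flags, max_closed_frames=15):
    """Return True if the eyes stay closed for more than max_closed_frames frames in a row.

    eye_closed_flags holds one boolean per video frame. At an assumed 30 fps,
    the default of 15 frames corresponds to about half a second.
    """
    run = 0
    for closed in eye_closed_flags:
        run = run + 1 if closed else 0
        if run > max_closed_frames:
            return True
    return False


low_contrast = [[10, 20], [30, 40]]
stretched = equalize_histogram(low_contrast)
```

Equalization spreads the intensities over the full 0 to 255 range, which helps the detector cope with the uneven lighting typical of a vehicle cabin.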

Smart Rear-View Camera

Modern vehicles include a rear-view camera as a basic feature, allowing drivers to monitor the area behind the vehicle while reversing. An automotive-grade megapixel HDR camera, an ultrasonic sensor and Human Interface Devices (HID) are the essential components that turn the rear license plate camera into a smart solution. The reference design runs on the TySOM-2 board under the control of an embedded Linux OS, providing an easy way to collect, fuse and process simultaneous data from several digital sensors using the strengths of both the ARM Processing System (PS) and the Programmable Logic/FPGA (PL) sides of the Xilinx Zynq-7000 All Programmable device. The processed data is used to overlay visual warnings on the rear-view video stream and to generate a sonic alert when a possible obstacle is close to the vehicle.

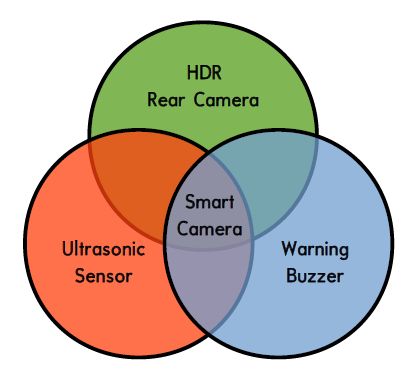

Figure 7: Smart rear-view processing details
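The sensor fusion described above can be sketched as a small decision function that turns an ultrasonic echo time into a warning level for the video overlay and a beep interval for the buzzer. This is a hedged sketch, not the reference design's logic: the distance thresholds, the beep periods and all function names are hypothetical, and only the time-of-flight relation (distance equals speed of sound times echo time, divided by two) is standard.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in dry air at about 20 degrees C


def echo_to_distance_m(echo_time_s):
    """Convert a round-trip ultrasonic echo time in seconds to a one-way distance in meters."""
    return SPEED_OF_SOUND_M_S * echo_time_s / 2.0


def warning_level(distance_m, caution_m=1.5, danger_m=0.5):
    """Classify the obstacle distance. Thresholds are illustrative assumptions."""
    if distance_m <= danger_m:
        return "danger"
    if distance_m <= caution_m:
        return "caution"
    return "clear"


def buzzer_period_s(level):
    """Return the beep interval in seconds for a warning level, or None for no sound."""
    return {"danger": 0.1, "caution": 0.5}.get(level)


# Example: a 10 ms round trip corresponds to an obstacle about 1.7 m away.
distance = echo_to_distance_m(0.010)
level = warning_level(distance)
period = buzzer_period_s(level)
```

In the reference design the warning level would drive both the overlay drawn on the rear-view video stream and the buzzer, so keeping the classification in one place keeps the visual and sonic alerts consistent.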

Corporate Headquarters

2260 Corporate Circle

Henderson, NV 89074 USA

Tel: +1 702 990 4400

Fax: +1 702 990 4414

https://www.aldec.com

©2026 Aldec, Inc.