FPGA-based NVMe Data Storage

Introduction

The Non-Volatile Memory Express (NVMe) protocol was introduced in 2012 to replace the legacy SATA interface used by solid-state drives (SSDs). Its main purpose is to remove the bottlenecks of the existing data path and to deliver the low latency and high bandwidth that the current generation of NAND flash memory can sustain. Built around the underlying memory architecture, NVMe exploits the internal parallelism of NAND memory and uses the standard high-speed PCIe serial bus to provide sequential I/O throughput of several gigabytes per second (GB/s) and latencies of tens of microseconds for random 4K accesses.

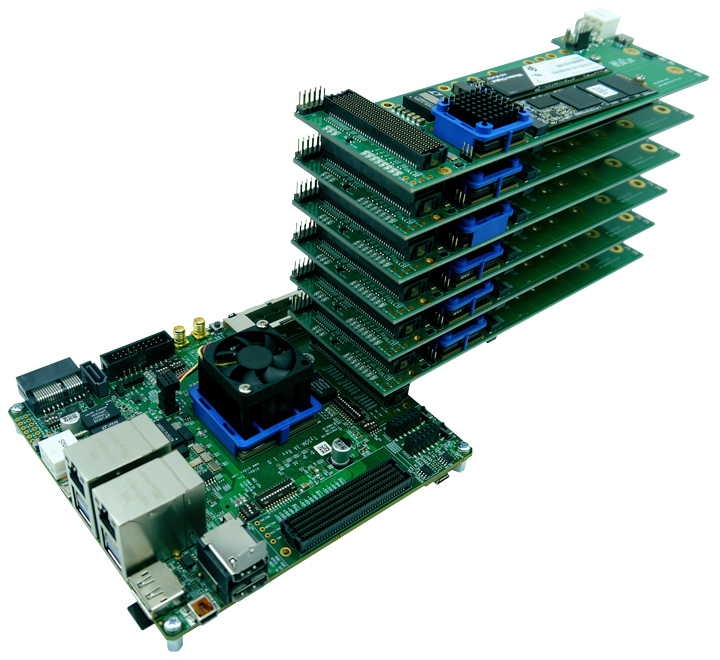

Over the last few years, FPGAs have shown solid performance in data center and cloud acceleration applications, where NVMe plays an important role. For these applications, Aldec has produced the FMC-NVMe expansion card for prototyping and emulation platforms. The card delivers the high-bandwidth, low-latency data storage required in applications such as High Performance Computing (HPC), High Frequency Trading (HFT), machine learning, data centers and any other application with demanding read/write speed and memory bandwidth requirements. The FMC board is stackable and supports four NVMe SSDs in M.2 form factor through a high-performance PCIe switch. Up to eight daughter cards, holding up to 32 NVMe SSDs, can be stacked on one FMC connector of the carrier card. To help developers get started with this board, Aldec has prepared a reference design for the Aldec TySOM-3A-ZU19EG and TySOM-3-ZU7EV embedded development boards. In this design, eight FMC-NVMe cards are stacked on top of each other, providing 32 NVMe SSDs.

Figure 1 shows the hardware setup for this solution.

Reference Design Description

Accelerating NAND flash access over PCIe, as NVMe does, is not the industry's first attempt to bring PCIe transfer speeds to data storage devices. What made NVMe so successful is the direct connection between the NAND controller and the host processor, without unnecessary protocol translations in the data path. The NVMe SSDs used here connect over a x4 PCIe Gen3 link (8 GT/s per lane), which gives a theoretical maximum raw rate of 32 Gb/s, or about 3.94 GB/s after 128b/130b encoding. Aldec TySOM-3/3A embedded prototyping boards are based on Xilinx Zynq UltraScale+ MPSoC technology, which combines a high-performance application processing unit (APU) and a DDR4 system memory controller with hardened, configurable PCIe Gen3 blocks in the programmable logic. These features let the boards implement a full-featured PCIe Root Complex Root Port that meets the physical-layer requirements of the NVMe protocol.
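
As a quick check of the link figures quoted above, the sketch below computes the raw and post-encoding bandwidth of a PCIe Gen3 link. It models only the 8 GT/s lane rate and the 128b/130b line encoding; TLP/DLLP packet overhead is ignored, so real payload throughput is somewhat lower. The function name is illustrative.

```python
# PCIe Gen3 signalling rate per lane, in gigatransfers per second.
GEN3_GT_PER_S = 8.0
# Gen3 line encoding: 128 payload bits for every 130 bits on the wire.
GEN3_ENCODING_EFFICIENCY = 128 / 130


def gen3_link_bandwidth(lanes: int) -> tuple[float, float]:
    """Return (raw Gb/s, post-encoding GB/s) for a PCIe Gen3 link.

    Packet (TLP/DLLP) overhead is ignored, so usable payload
    throughput is lower than the second value.
    """
    raw_gbps = GEN3_GT_PER_S * lanes
    effective_gb_per_s = raw_gbps * GEN3_ENCODING_EFFICIENCY / 8  # bits -> bytes
    return raw_gbps, effective_gb_per_s


for lanes in (4, 8):
    raw, eff = gen3_link_bandwidth(lanes)
    print(f"x{lanes} Gen3: {raw:.0f} Gb/s raw, {eff:.2f} GB/s after encoding")
```

This prints 32 Gb/s raw and about 3.94 GB/s for a x4 drive link, and 64 Gb/s raw and about 7.88 GB/s for the x8 Root Port link described below.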

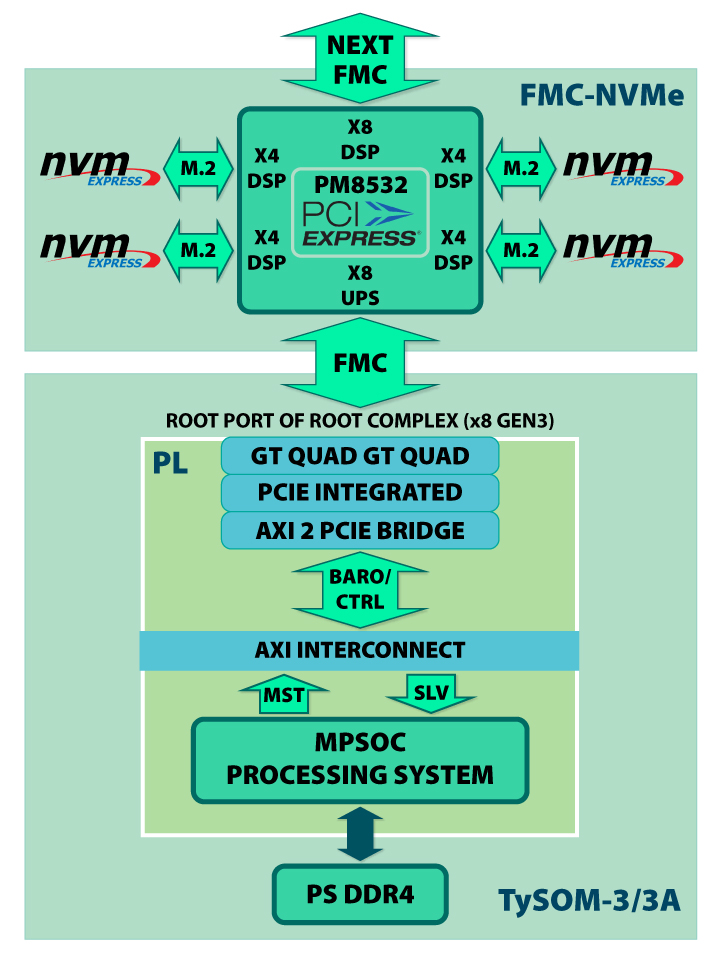

The PCIe Root Complex is configured for x8 Gen3 lanes and connects through the high-pin-count (HPC) FMC connector (FMC1) to the 32-lane Microsemi PM8532 PCIe switch on the FMC-NVMe daughter card. The PM8532 switch serves four NVMe SSDs, each attached to a 4-lane M.2 connector. The remaining 8 lanes are routed to the top FMC connector, where they connect to the next FMC-NVMe card in the stack. The overall system topology is shown in Figure 2. The reference design runs under embedded Linux, which includes the Xilinx pcie-xdma-pl driver for the PCIe Root Complex subsystem and the mainline nvme driver for NVMe protocol support.
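
The lane budget and cascade described above can be captured in a small model. This is a hypothetical sketch based only on the figures in this section (a 32-lane switch, a x8 link from below, four x4 M.2 slots and a x8 link to the next card); the names `Drive`, `lane_budget_ok` and `enumerate_drives` are illustrative and are not part of the reference design.

```python
from dataclasses import dataclass

SWITCH_LANES = 32    # PM8532 lane count, as stated above
UPSTREAM_LANES = 8   # x8 link toward the Root Port (or the card below)
SSD_SLOTS = 4        # M.2 connectors per FMC-NVMe card
SSD_LANES = 4        # x4 link per M.2 SSD
CASCADE_LANES = 8    # x8 link to the next card in the stack
MAX_CARDS = 8        # maximum stack height


@dataclass
class Drive:
    card: int  # 0 = card plugged directly into the carrier's FMC connector
    slot: int  # M.2 slot index on that card

    @property
    def switch_hops(self) -> int:
        # Each card in the cascade adds one PM8532 between the Root Port and the drive.
        return self.card + 1


def lane_budget_ok() -> bool:
    """Check that upstream + SSD + cascade lanes exactly fill one switch."""
    used = UPSTREAM_LANES + SSD_SLOTS * SSD_LANES + CASCADE_LANES
    return used == SWITCH_LANES


def enumerate_drives(cards: int) -> list[Drive]:
    """List every drive position in a stack of `cards` FMC-NVMe cards."""
    if not 1 <= cards <= MAX_CARDS:
        raise ValueError(f"stack supports 1..{MAX_CARDS} cards, got {cards}")
    return [Drive(card=c, slot=s) for c in range(cards) for s in range(SSD_SLOTS)]


if __name__ == "__main__":
    drives = enumerate_drives(MAX_CARDS)
    print(lane_budget_ok(), len(drives), max(d.switch_hops for d in drives))
```

With eight cards, the model yields 32 drives, and a drive on the top card sits behind eight cascaded switches, which is consistent with the per-card latency overhead reported in the performance section.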

Figure 2: Reference Design System View

Main Features

- Zynq UltraScale+ MPSoC based TySOM development board;

- Up to eight FMC-NVMe daughter cards in a stack;

- NVMe SSDs in M.2 form factor from OEM vendors (up to 32 drives per host).

Solution Contents

- TySOM-3-ZU7EV or TySOM-3A-ZU19EG embedded prototyping board;

- FMC-NVMe daughter card (up to eight in a single stack);

- Technical documentation;

- PM8532 PCIe switch configuration file and flash image;

- Reference design source files and binaries;

- Example scripts for bandwidth and latency benchmarks.

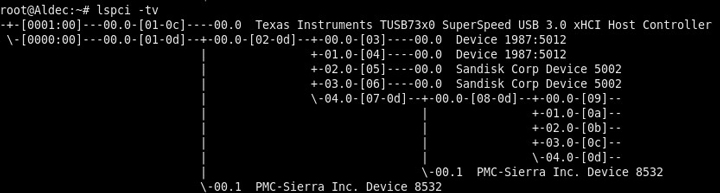

Successful link-up of all PCIe switch ports can be verified in the Microsemi ChipLink environment over the debug UART connection (at least two FMC-NVMe cards must be stacked to occupy all ports of one switch), as well as with the Linux lspci tool. In addition, the link-up status of every port is routed to the user LEDs on the FMC-NVMe card and can be checked visually. Figure 3 shows the PCIe topology, as seen from Linux, for the test configuration described above.
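
Besides lspci, Linux exposes the same link information through the standard sysfs attributes `class`, `current_link_speed` and `current_link_width` under /sys/bus/pci/devices. The sketch below lists every NVMe controller (PCI class code 0x010802) with its negotiated link speed and width. The function names are hypothetical, and the exact speed string (for example "8.0 GT/s PCIe") depends on the kernel version.

```python
from pathlib import Path

# PCI class code for NVMe: mass storage (0x01) / NVM controller (0x08) / NVMe (0x02).
NVME_CLASS = "0x010802"


def _read_attr(dev: Path, name: str) -> str:
    """Read one sysfs attribute, returning '?' if it is missing or unreadable."""
    try:
        return (dev / name).read_text().strip()
    except OSError:
        return "?"


def nvme_links(sysfs_root: str = "/sys/bus/pci/devices") -> list[tuple[str, str, str]]:
    """Return (PCI address, link speed, link width) for each NVMe controller."""
    root = Path(sysfs_root)
    if not root.is_dir():
        return []
    links = []
    for dev in sorted(root.iterdir()):
        if _read_attr(dev, "class") != NVME_CLASS:
            continue  # skip switch ports, bridges and other devices
        links.append((dev.name,
                      _read_attr(dev, "current_link_speed"),
                      _read_attr(dev, "current_link_width")))
    return links


if __name__ == "__main__":
    for address, speed, width in nvme_links():
        print(f"{address}: {speed}, x{width}")
```

Assuming every drive trains at its full capability, a fully populated eight-card stack should report 32 entries, each with a Gen3 speed and a x4 width.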

Figure 3: PCIe Topology

Performance Statistics

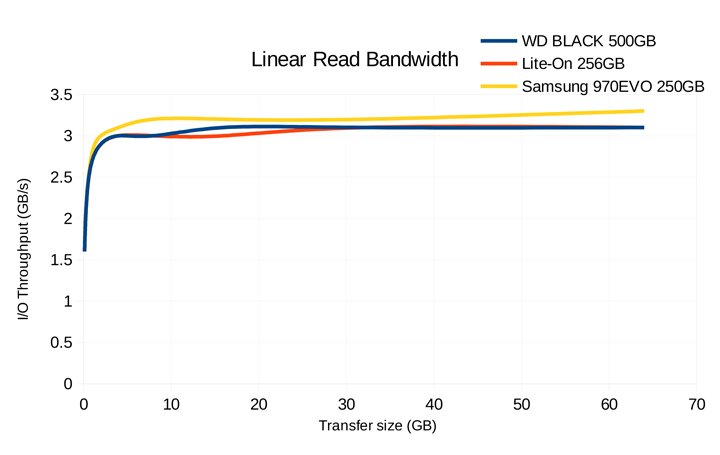

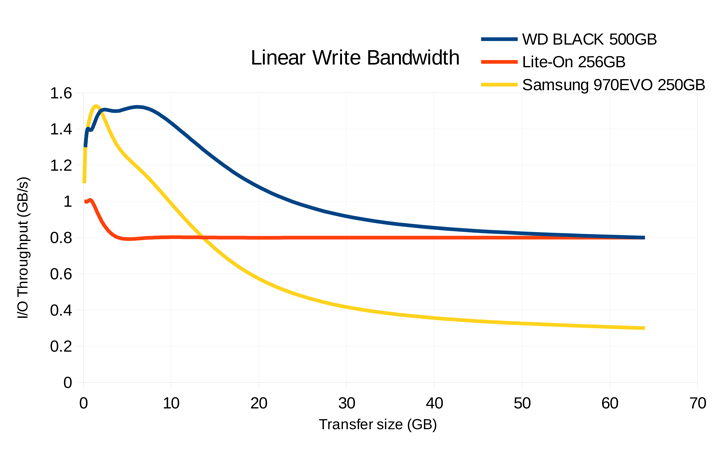

To estimate the performance of the reference design, a series of low-level I/O benchmarks was run in Linux user space with the standard dd command-line utility. The charts in Figures 4 and 5 show how I/O throughput depends on the data transfer size. Direct I/O (O_DIRECT) was used so that transfers bypass the Linux page cache and its overhead. The read results show an approximately linear dependency between transfer speed and transfer size, peaking at 3.3 GB/s. For writes, each tested NVMe SSD has its own peak-performance region (1.0 to 1.5 GB/s), because the drives implement different amounts of fast SLC NAND memory as a write cache, while most of their storage capacity is built from slower TLC NAND memory.
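
The dd runs described above can be scripted. The sketch below is an assumption about how such a sweep might look, not Aldec's benchmark scripts: it builds GNU dd command lines with `iflag=direct`/`oflag=direct` (O_DIRECT) and extracts throughput from the summary line that GNU dd prints on stderr. It also assumes that "transfer size" corresponds to dd's `bs` parameter. The device path and helper names are hypothetical, and the write command overwrites the target device.

```python
import re
import subprocess

# GNU dd ends with e.g. "1073741824 bytes (1.1 GB, 1.0 GiB) copied, 0.32 s, 3.3 GB/s".
_DD_SUMMARY = re.compile(r"^(\d+) bytes .*copied, ([\d.]+) s", re.MULTILINE)


def dd_read_cmd(device: str, block_size: int, count: int) -> list[str]:
    """Sequential O_DIRECT read from `device`, discarding the data."""
    return ["dd", f"if={device}", "of=/dev/null",
            f"bs={block_size}", f"count={count}", "iflag=direct"]


def dd_write_cmd(device: str, block_size: int, count: int) -> list[str]:
    """Sequential O_DIRECT write of zeros. DESTROYS data on `device`."""
    return ["dd", "if=/dev/zero", f"of={device}",
            f"bs={block_size}", f"count={count}", "oflag=direct"]


def parse_dd_throughput(stderr: str) -> float:
    """Return throughput in GB/s (10**9 bytes/s) from dd's summary line."""
    match = _DD_SUMMARY.search(stderr)
    if match is None:
        raise ValueError("no dd summary line found")
    nbytes, seconds = int(match.group(1)), float(match.group(2))
    return nbytes / seconds / 1e9


def run_dd(cmd: list[str]) -> float:
    """Run one dd command and return its measured throughput in GB/s."""
    proc = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_dd_throughput(proc.stderr)
```

For example, `run_dd(dd_read_cmd("/dev/nvme0n1", 1 << 20, 1024))` reads 1 GiB in 1 MiB blocks and returns GB/s. Computing the rate from the byte count and elapsed time, rather than parsing dd's rounded rate string, avoids handling its unit suffixes.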

Figure 4: Single SSD Read Bandwidth

When data is transferred simultaneously to multiple SSDs (four SSDs attached to the same FMC-NVMe daughter card), the total bandwidth increases to 4 GB/s for sequential reads and up to 3.4 GB/s for sequential writes.
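
To put these aggregate figures in context, the sketch below computes the combined throughput of drives started at the same time and compares it with the x8 Gen3 link between the Root Port and the first switch, which all four drives on that card share. Dividing total bytes by the slowest job's time is a conservative choice; summing per-drive rates would overstate the aggregate if the jobs finish at different times. The function names are illustrative, and any job figures passed in are hypothetical inputs, not measurements.

```python
# x8 PCIe Gen3 between the Root Port and the first PM8532:
# 8 lanes x 8 GT/s x 128/130 encoding / 8 bits per byte ~= 7.88 GB/s,
# before TLP/DLLP packet overhead.
X8_GEN3_GB_PER_S = 8 * 8.0 * (128 / 130) / 8


def aggregate_throughput(jobs: list[tuple[int, float]]) -> float:
    """Aggregate GB/s for jobs started together, given (bytes, seconds) per drive.

    Uses total bytes over the slowest job's elapsed time.
    """
    if not jobs:
        raise ValueError("need at least one job")
    total_bytes = sum(nbytes for nbytes, _ in jobs)
    wall_seconds = max(seconds for _, seconds in jobs)
    return total_bytes / wall_seconds / 1e9


def link_utilization(aggregate_gb_per_s: float) -> float:
    """Fraction of the encoding-limited x8 Gen3 ceiling in use."""
    return aggregate_gb_per_s / X8_GEN3_GB_PER_S
```

For the 4 GB/s read result above, `link_utilization(4.0)` gives roughly 0.51, i.e. about half of the encoding-limited x8 ceiling.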

In addition, 4K random-access latency was measured from Linux user space with the nvme-cli NVMe management tool to evaluate IOPS performance. The measured latencies are in the range of 35-50 µs for writes and 70-125 µs for reads. Note that every additional FMC-NVMe card in the stack adds about 1-2 µs of latency.
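
The latency figures can be turned into rough IOPS and stacking estimates. This sketch assumes a queue depth of 1 (one outstanding command, so IOPS = 1 / latency) and the 1-2 µs per-card overhead quoted above; both function names are illustrative. Deeper queues, which NVMe is designed to exploit, would give considerably higher IOPS than these estimates.

```python
def qd1_iops(latency_us: float) -> float:
    """IOPS at queue depth 1: one command in flight, so IOPS = 1 / latency."""
    return 1e6 / latency_us


def stacked_latency_us(base_us: float, card_index: int, per_card_us: float) -> float:
    """Latency of a drive on card `card_index` (0 = card on the carrier),
    assuming each card below it adds `per_card_us` of switch latency."""
    return base_us + card_index * per_card_us


if __name__ == "__main__":
    # Read latency range and worst-case per-card overhead quoted in the text.
    for latency in (70.0, 125.0):
        print(f"{latency:.0f} us -> {qd1_iops(latency):,.0f} IOPS at QD1")
    print(f"top of an 8-card stack: +{stacked_latency_us(0.0, 7, 2.0):.0f} us worst case")
```

Under these assumptions, the 70-125 µs read range corresponds to roughly 8,000-14,300 IOPS at queue depth 1, and a drive on the top card of an eight-card stack sees at most about 7 × 2 = 14 µs of extra latency.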

Figure 5: Single SSD Write Bandwidth
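
The SLC write-cache explanation given for the single-SSD write results can be illustrated with a simple two-rate model: data that fits in the SLC cache is written at a high rate, and anything beyond the cache is written at the slower TLC rate. This is a simplified sketch, not a model of any specific tested drive; the cache size and rates in the example are hypothetical, and background cache flushing is ignored.

```python
def sustained_write_gb_per_s(total_gb: float, cache_gb: float,
                             slc_gb_per_s: float, tlc_gb_per_s: float) -> float:
    """Average write rate when the first `cache_gb` go to the SLC cache and the
    rest is written directly at the TLC rate (no background flushing modeled)."""
    if total_gb <= 0:
        raise ValueError("total_gb must be positive")
    cached = min(total_gb, cache_gb)
    uncached = total_gb - cached
    seconds = cached / slc_gb_per_s + uncached / tlc_gb_per_s
    return total_gb / seconds


if __name__ == "__main__":
    # Hypothetical drive: 12 GB SLC cache, 1.5 GB/s into SLC, 0.5 GB/s into TLC.
    for size in (6.0, 12.0, 24.0, 48.0):
        rate = sustained_write_gb_per_s(size, 12.0, 1.5, 0.5)
        print(f"{size:>4.0f} GB write: {rate:.2f} GB/s")
```

With these hypothetical parameters, a 6 GB transfer averages 1.5 GB/s while a 24 GB transfer drops to 0.75 GB/s, showing how the cache size alone can shift each drive's peak-performance region.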

Conclusion

Introduced several years ago and now widely adopted, the NVMe protocol brings data center and consumer storage devices closer to the latency and throughput limits of NAND flash memory. As NAND technology continues to evolve over the next few years, the NVMe protocol will evolve with it, providing even better speed and new features. Aldec's FMC-NVMe expansion card lets FPGA-based emulation and prototyping solutions benefit from NVMe technology in data storage acceleration applications today. For more information on accessing this solution for your prototyping or end-user project, please contact us at sales@aldec.com.

Corporate Headquarters

2260 Corporate Circle

Henderson, NV 89074 USA

Tel: +1 702 990 4400

Fax: +1 702 990 4414

https://www.aldec.com

©2026 Aldec, Inc.